Publications

A collection of my research work. † denotes equal contribution.

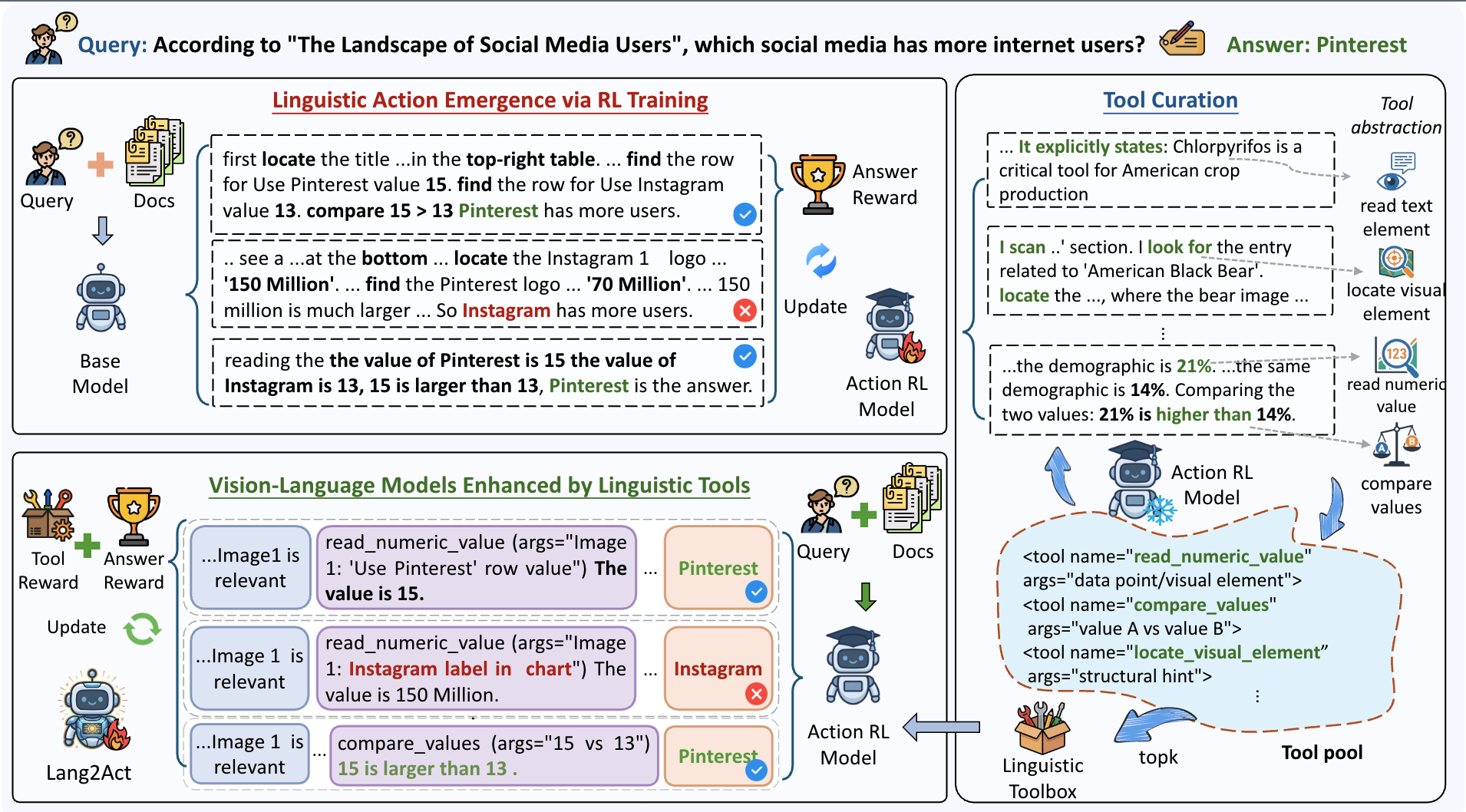

Lang2Act: Fine-Grained Visual Reasoning through Self-Emergent Linguistic Toolchains

Yuqi Xiong†, Chunyi Peng†, Zhenghao Liu, Zhipeng Xu, et al.

ACL 2026

This paper introduces Lang2Act, a framework that improves fine-grained visual reasoning by letting vision-language models (VLMs) use self-emergent linguistic toolchains instead of fixed external visual tools. It trains the model with a two-stage reinforcement learning (RL) scheme: the first stage discovers reusable linguistic tools, and the second optimizes the model to apply them to downstream visual question answering.

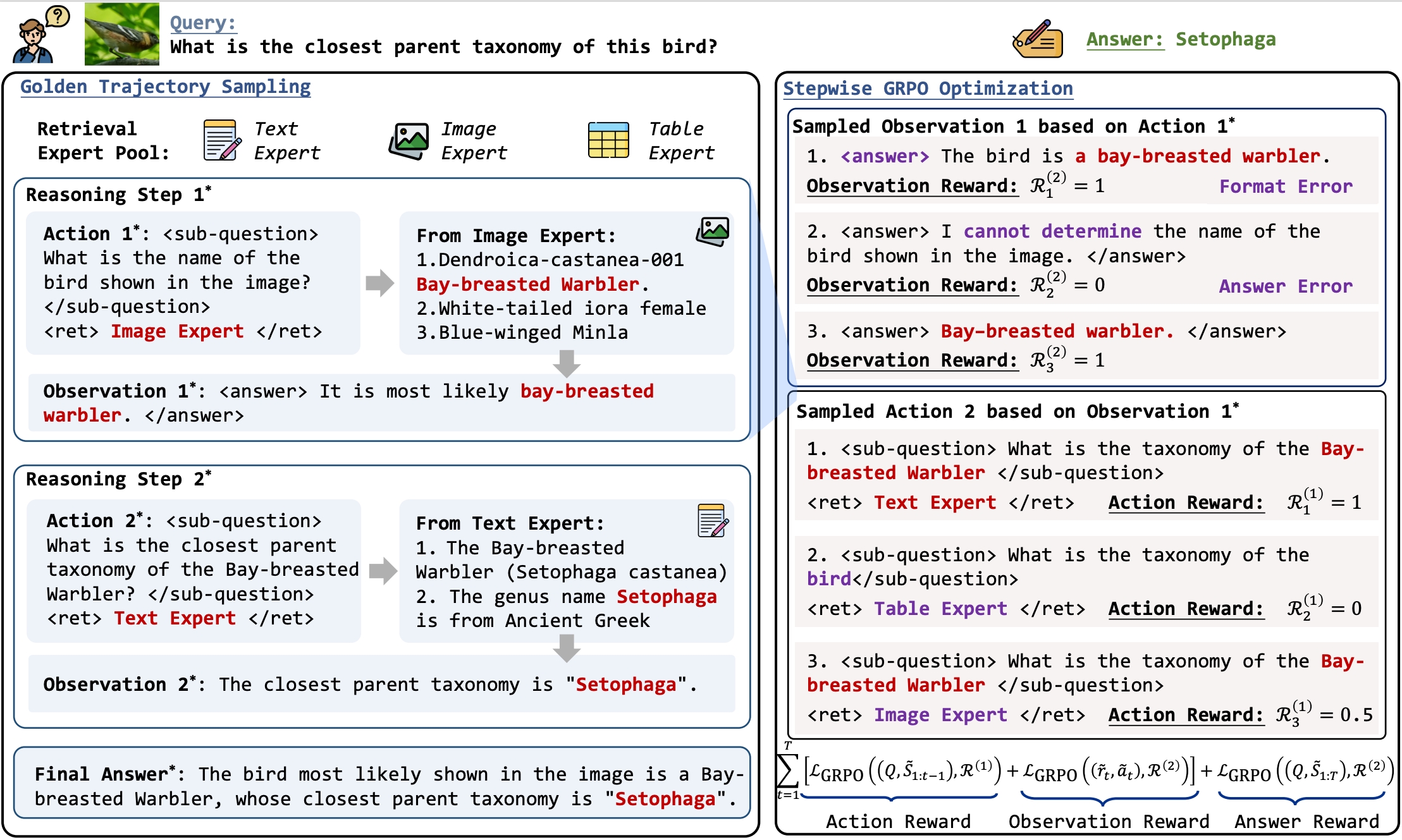

Mixture-of-Retrieval Experts for Reasoning-Guided Multimodal Knowledge Exploitation

Chunyi Peng†, Zhipeng Xu†, Zhenghao Liu, Yukun Yan, et al.

SIGIR 2026

This paper introduces MoRE, a multimodal RAG framework that lets MLLMs dynamically coordinate text, image, and table retrieval experts during reasoning. It further proposes Step-GRPO to train expert routing with fine-grained stepwise feedback, improving both answer accuracy and retrieval efficiency.
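As its name suggests, Step-GRPO extends Group Relative Policy Optimization (GRPO). For readers unfamiliar with GRPO, the sketch below shows only the standard group-relative advantage: several responses are sampled for the same question, and each response's reward is normalized against the mean and standard deviation of its own group. It does not reproduce Step-GRPO's stepwise feedback or MoRE's expert routing. The function name, the `eps` value, and the binary example rewards are illustrative assumptions.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Standard GRPO-style advantage (illustrative sketch, not MoRE's code).

    Each sampled response's reward is centered on the group mean and scaled
    by the group standard deviation. Population std is used here; some
    implementations use the sample std instead. `eps` avoids division by
    zero when every response in the group receives the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four rollouts sampled for one question
# (1.0 = correct final answer, 0.0 = incorrect).
rewards = [1.0, 0.0, 1.0, 0.0]
print([round(a, 3) for a in group_relative_advantages(rewards)])
# prints [1.0, -1.0, 1.0, -1.0]
```

Responses that beat their group average receive positive advantages and the rest receive negative ones, so no separate value model is needed. A group where all rewards are equal yields zero advantage and contributes no learning signal. Finer-grained, per-step rewards such as those Step-GRPO describes are one way to make that signal more informative.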

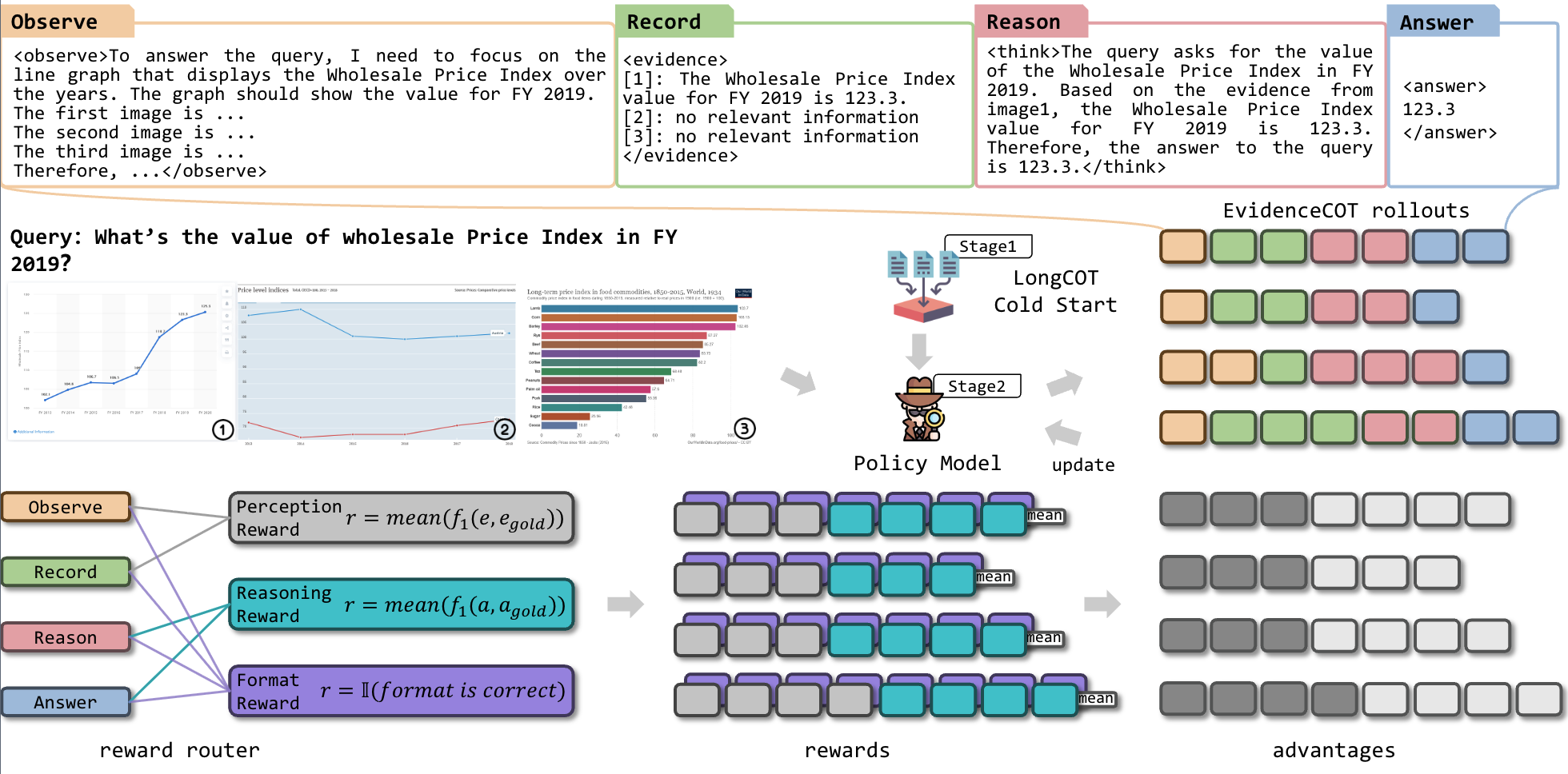

VisRAG2.0: Mitigating Visual Hallucinations via Evidence-Guided Multi-Image Reasoning in Visual Retrieval-Augmented Generation

Yubo Sun†, Chunyi Peng†, Yukun Yan, Zhenghao Liu, et al.

Preprint 2025

This paper introduces EVisRAG, a visual retrieval-augmented generation framework that improves multi-image reasoning by explicitly collecting question-relevant evidence from the retrieved images before generating an answer. It also proposes RS-GRPO, a reward-scoped training strategy that strengthens evidence grounding and reduces visual hallucinations in visual question answering.

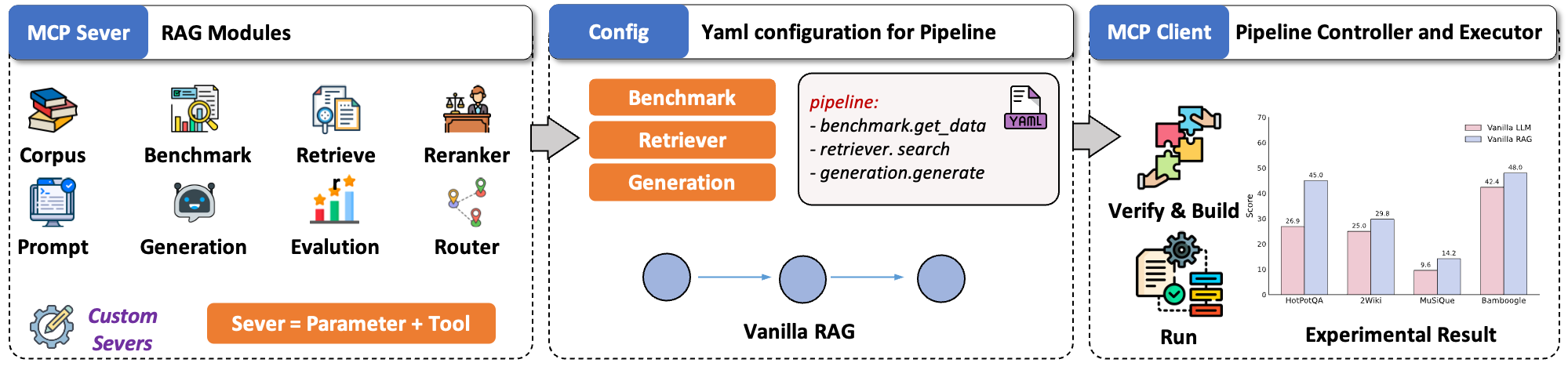

UltraRAG v2: A Low-Code MCP Framework for Building Complex and Innovative RAG Pipelines

Sen Mei, Haidong Xin, Chunyi Peng, Yukun Yan, et al.

OpenBMB 2025

This work introduces UltraRAG v2, a low-code framework built on the Model Context Protocol (MCP) for building complex and innovative retrieval-augmented generation (RAG) pipelines.